In today’s competitive digital world, SEO is not just about content and backlinks. Technical SEO plays a huge role in deciding whether your website ranks or disappears from search engines. One of the most ignored yet powerful technical SEO elements is the robots.txt file.

Many websites fail to rank not because their content is bad, but because search engines are unable to crawl their pages properly. This is where a Robots.txt Generator Tool becomes extremely important.

What Is a Robots.txt Generator Tool?

A Robots.txt Generator Tool is an online utility that helps website owners create a robots.txt file automatically, without needing technical or coding knowledge.

The robots.txt file tells search engine bots such as:

- Googlebot

- Bingbot

- Yahoo Slurp

which pages or folders they are allowed to crawl and which ones they should avoid.

Instead of manually writing confusing rules, a generator tool creates a clean, error-free robots.txt file based on your selections.

In simple words, this tool helps you control search engine crawlers safely and correctly.
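To make this concrete, here is a minimal sketch of how a crawler might read such a file, using Python's standard `urllib.robotparser` module. The sample rules and the `is_allowed` helper are illustrative assumptions, and real search engines apply their own matching logic, so treat this as an approximation rather than a replica of Googlebot.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules, similar to what a generator tool might output.
SAMPLE_RULES = "User-agent: *\nDisallow: /admin/\n"


def is_allowed(rules: str, user_agent: str, url: str) -> bool:
    """Return True if `user_agent` may crawl `url` under `rules`."""
    parser = RobotFileParser()
    parser.parse(rules.splitlines())
    return parser.can_fetch(user_agent, url)


print(is_allowed(SAMPLE_RULES, "Googlebot", "https://example.com/blog/post"))    # True
print(is_allowed(SAMPLE_RULES, "Googlebot", "https://example.com/admin/users"))  # False
```

Anything not matched by a Disallow rule stays crawlable, which is why a short file is often enough.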

Why Is a Robots.txt Generator Tool Important?

The robots.txt file is a small text file placed in the root directory of your website.

Example:

yourwebsite.com/robots.txt

Its main purpose is to:

- Guide search engine crawlers

- Keep crawlers away from private or duplicate pages

- Improve crawl efficiency

- Support better SEO performance

Without a proper robots.txt file:

- Crawlers may waste crawl budget

- Sensitive pages may get indexed

- Important pages may be skipped

- SEO performance may suffer badly
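Because crawlers look for the file at the root of the site, a tool can work out where the file belongs from any page URL. The sketch below uses Python's standard `urllib.parse`; the function name `robots_txt_url` is an illustrative assumption.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(page_url: str) -> str:
    """Return the robots.txt location for the site that serves `page_url`."""
    parts = urlsplit(page_url)
    # Keep the scheme and host, replace the path, drop query and fragment.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


print(robots_txt_url("https://yourwebsite.com/blog/post?id=7"))
# https://yourwebsite.com/robots.txt
```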

How Does a Robots.txt Generator Tool Work?

A robots.txt generator tool follows a simple but powerful process:

1. User Input

The tool asks you to select:

- Which bots you want to target (Google, Bing, all bots)

- Which folders or pages to block

- Which pages to allow

- Sitemap URL (optional but recommended)

2. Rule Creation

Based on your input, the tool automatically creates the following directives with correct syntax and structure:

- User-agent

- Allow

- Disallow

- Sitemap

3. Error Prevention

The generator ensures:

- No syntax errors

- No accidental full site blocking

- SEO-friendly formatting

4. Download or Copy

You can:

- Copy the robots.txt code

- Download it as a file

- Upload it directly to your website

This process removes confusion and prevents costly SEO mistakes.

How to Make a Robots.txt Generator Tool

Building a Robots.txt Generator Tool may sound technical at first, but in reality, it is one of the simplest and most useful SEO tools you can create. With basic logic, a clean interface, and SEO understanding, you can build a tool that helps thousands of website owners—and even earn money from it.

Let’s break the process into simple, practical steps.

Step 1. Understand What the Tool Should Do

Before writing any code, you must clearly understand the purpose of a robots.txt generator tool.

The tool should:

- Help users create a valid robots.txt file

- Prevent common SEO mistakes

- Allow users to control search engine crawlers

- Generate clean, error-free syntax

In simple words, your tool should convert user choices into a proper robots.txt file.

Step 2. Decide the Core Features

A basic robots.txt generator tool should include:

- Select user-agent (Googlebot, Bingbot, All bots)

- Allow or disallow specific folders/pages

- Option to block admin or private sections

- Add sitemap URL

- Generate robots.txt instantly

- Copy or download option

Start simple. You can always add advanced features later.

Step 3. Design a Simple User Interface (UI)

Your tool’s success depends heavily on simplicity.

Recommended UI Elements:

- Dropdown for User-Agent selection

- Input box for disallowed directories (e.g. /admin/, /login/)

- Checkbox for common rules (block admin, allow CSS/JS)

- Input field for Sitemap URL

- “Generate Robots.txt” button

- Output preview box

Remember: Beginners should understand it without confusion.
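Whatever users type into the directory box has to be cleaned before it becomes a rule. Here is one possible sketch in Python (the same idea carries over to JavaScript). The helper name `parse_paths` and the choice to add a missing leading slash are assumptions, not a fixed standard.

```python
from typing import List


def parse_paths(raw: str) -> List[str]:
    """Split comma- or newline-separated input into clean, unique paths."""
    paths: List[str] = []
    for chunk in raw.replace("\n", ",").split(","):
        path = chunk.strip()
        if not path:
            continue  # skip empty entries such as ",,"
        if not path.startswith("/"):
            path = "/" + path  # robots.txt paths are written from the site root
        if path not in paths:
            paths.append(path)  # keep first occurrence, preserve order
    return paths


print(parse_paths("/admin/, login/ ,,/admin/"))
# ['/admin/', '/login/']
```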

Step 4. Write the Core Logic (How It Actually Works)

This is the heart of your robots.txt generator tool.

Basic Logic Flow:

- User selects options

- Tool collects inputs

- Tool converts inputs into robots.txt rules

- Rules are displayed as final output

Example Logic (Conceptual):

- If user selects “All Bots” → User-agent: *

- If user blocks admin → Disallow: /admin/

- If sitemap is added → Sitemap: https://example.com/sitemap.xml

Your tool simply combines these rules line by line.

You can build this logic using:

- HTML + JavaScript (best for beginners)

- PHP (for server-side processing)

- React / Vue (for advanced tools)
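The line-by-line combining described above can be sketched in a few lines. This version is in Python for brevity, and the same logic ports directly to JavaScript or PHP. The `BOT_TOKENS` mapping, the function name, and its parameters are illustrative assumptions.

```python
from typing import Iterable, List

# Hypothetical mapping from dropdown labels to user-agent tokens.
BOT_TOKENS = {"All Bots": "*", "Google": "Googlebot", "Bing": "Bingbot"}


def build_robots_txt(
    bot_label: str,
    disallow: Iterable[str] = (),
    allow: Iterable[str] = (),
    sitemap_url: str = "",
) -> str:
    """Turn the user's selections into robots.txt text."""
    lines: List[str] = [f"User-agent: {BOT_TOKENS[bot_label]}"]
    # Allow lines go first so parsers that stop at the first matching rule
    # see the exceptions before the broader blocks.
    lines += [f"Allow: {path}" for path in allow]
    blocked = list(disallow)
    lines += [f"Disallow: {path}" for path in blocked]
    if not blocked:
        lines.append("Disallow:")  # empty value means nothing is blocked
    if sitemap_url:
        lines += ["", f"Sitemap: {sitemap_url}"]
    return "\n".join(lines) + "\n"


print(build_robots_txt("All Bots", disallow=["/admin/"],
                       sitemap_url="https://example.com/sitemap.xml"))
```

With those inputs the output contains `User-agent: *`, `Disallow: /admin/`, a blank line, and the Sitemap line, matching the three conceptual rules.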

Step 5. Generate Error-Free Robots.txt Output

This step is critical for SEO safety.

Your tool must ensure:

- Correct syntax

- No extra spaces or broken rules

- Proper order of directives

- No accidental Disallow: /

Always show a live preview, so users can see what they are generating.
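One way to enforce these checks is a small validator that runs before the preview is shown. The sketch below is an assumption about how such a check could look, not a complete robots.txt linter. It only knows the four directives covered in this article, and the name `find_problems` is hypothetical.

```python
from typing import List

# The four directives this article's generator emits; anything else is flagged.
KNOWN_DIRECTIVES = {"user-agent", "allow", "disallow", "sitemap"}


def find_problems(robots_txt: str) -> List[str]:
    """Return human-readable problems found in `robots_txt`."""
    problems: List[str] = []
    seen_user_agent = False
    for number, raw in enumerate(robots_txt.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # ignore comments and padding
        if not line:
            continue
        if ":" not in line:
            problems.append(f"line {number}: missing ':' separator")
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        field = field.lower()
        if field not in KNOWN_DIRECTIVES:
            problems.append(f"line {number}: unknown directive '{field}'")
        elif field == "user-agent":
            seen_user_agent = True
        elif field in ("allow", "disallow"):
            if not seen_user_agent:
                problems.append(f"line {number}: rule before any User-agent line")
            if field == "disallow" and value == "/":
                problems.append(f"line {number}: 'Disallow: /' blocks the whole site")
    return problems


print(find_problems("User-agent: *\nDisallow: /\n"))
# ["line 2: 'Disallow: /' blocks the whole site"]
```

A tool could show these messages next to the preview and ask for confirmation before letting the user copy a file that blocks everything.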

Step 6. Add Download & Copy Options

To improve user experience, allow users to:

- Copy robots.txt with one click

- Download it as a robots.txt file

This makes the tool more practical and professional.
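In a browser-based tool the download would be handled in JavaScript, but if the file is produced server-side or by a script, saving it could look roughly like this. The helper `save_robots_txt` is a hypothetical name, and the temporary folder is only there to keep the example self-contained.

```python
import tempfile
from pathlib import Path


def save_robots_txt(content: str, directory: str) -> Path:
    """Write `content` to <directory>/robots.txt and return the path."""
    target = Path(directory) / "robots.txt"
    # newline="\n" keeps Unix line endings even when run on Windows.
    with open(target, "w", encoding="utf-8", newline="\n") as handle:
        handle.write(content)
    return target


with tempfile.TemporaryDirectory() as folder:
    saved = save_robots_txt("User-agent: *\nDisallow:\n", folder)
    print(saved.name)  # robots.txt
```

The file name must be exactly robots.txt, all lowercase, so the tool should fix the name rather than let users choose it.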

Step 7. Test the Tool Thoroughly

Before publishing:

- Test multiple combinations

- Test empty inputs

- Test wrong inputs

- Ensure no SEO-damaging output is created

A small mistake in robots.txt can block an entire website, so testing is non-negotiable.
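A simple way to automate part of this testing is to run every input combination through the generator and confirm the homepage stays crawlable. In the sketch below, `generate` is a simplified stand-in for your own generator function, and the check relies on Python's `urllib.robotparser`, which only approximates how real search engines match rules.

```python
from itertools import product
from urllib.robotparser import RobotFileParser


def generate(user_agent, disallow):
    """Simplified stand-in for the tool's generator (an assumption)."""
    lines = [f"User-agent: {user_agent}"]
    lines += [f"Disallow: {path}" for path in disallow if path.strip()]
    if len(lines) == 1:
        lines.append("Disallow:")  # nothing blocked
    return "\n".join(lines) + "\n"


def homepage_is_crawlable(robots_txt, user_agent):
    """Return True if `user_agent` may still fetch the site's homepage."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, "https://example.com/")


AGENTS = ["*", "Googlebot", "Bingbot"]
PATH_SETS = [[], [""], ["/admin/"], ["/admin/", "/login/"]]  # includes empty input

failures = [
    (agent, paths)
    for agent, paths in product(AGENTS, PATH_SETS)
    if not homepage_is_crawlable(generate(agent, paths), agent)
]
print(failures)  # [] means no combination blocked the homepage
```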

Step 8. Make the Tool SEO-Friendly

To get traffic, your tool page must be optimised.

SEO Tips:

- Use keywords like:

- robots.txt generator

- create robots.txt file

- robots.txt for SEO

- Add a detailed explanation below the tool

- Include FAQs

- Add schema markup if possible

- Ensure fast loading speed

A good robots.txt generator tool can rank easily with proper SEO.

Step 9. Add Monetisation (Optional but Smart)

Once your tool starts getting traffic, you can monetise it.

Monetisation Ideas:

- Google AdSense

- Affiliate links (hosting, SEO tools)

- Premium version (advanced rules, multiple sites)

- Lead capture for SEO services

This turns a simple tool into a passive income asset.

Step 10. Plan for Future Improvements

To stay ahead, you can later add:

- AI-based rule suggestions

- Auto-detection of site structure

- Google Search Console integration

- Multi-language support

- Saved configurations for users

Start small, grow smart.

Final Thoughts

Creating a Robots.txt Generator Tool is one of the best entry-level projects in the SEO tools space. It is easy to build, highly useful, SEO-friendly, and monetisable.

If built correctly, this tool can:

- Help beginners avoid SEO disasters

- Improve crawl efficiency for websites

- Generate long-term organic traffic

- Earn passive income over time

Build it once, optimise it well, and it can serve both you and your users for years.

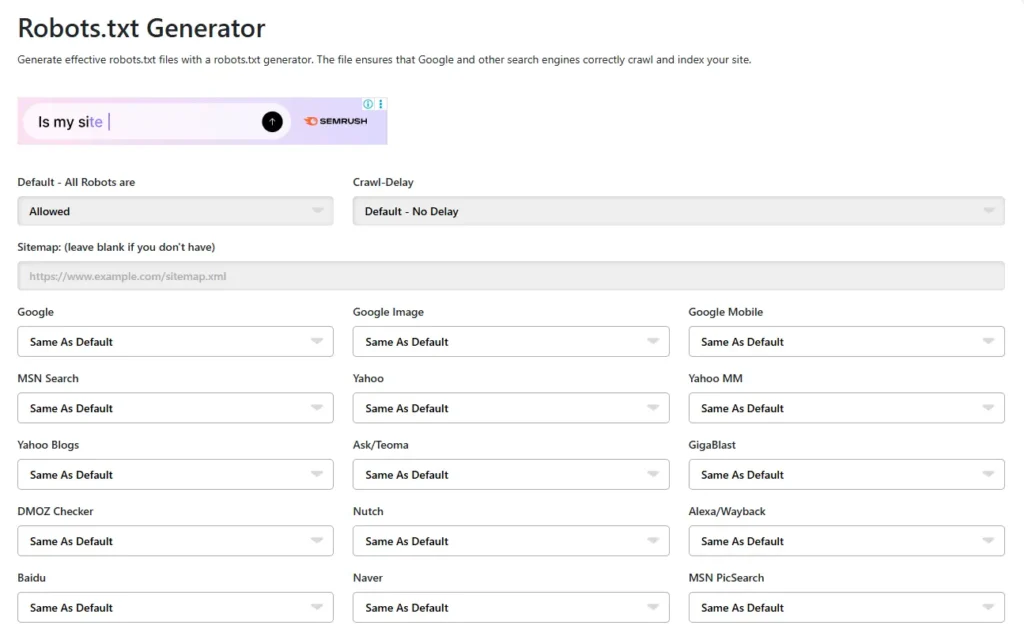

The image above shows how your tool site might look. You can also visit the Robots.txt Generator Tool website to see how it works.

Key Features of a Robots.txt Generator Tool

A good robots.txt generator tool offers several useful features:

1. Beginner-Friendly Interface

No coding knowledge required. Even beginners can create a file in seconds.

2. Multiple Search Engine Support

Create rules for:

- Googlebot

- Bingbot

- All user agents

3. Allow & Disallow Rules

Easily control:

- Admin pages

- Login pages

- Duplicate URLs

- Private folders

4. Sitemap Integration

Add your sitemap URL to help search engines discover pages faster.

5. Error-Free Output

The tool generates valid robots.txt syntax that search engines understand.

6. Instant Preview

Many tools show a live preview of the robots.txt file before downloading.

Why Should You Use a Robots.txt Generator Tool?

Manually creating a robots.txt file may look easy, but it’s risky.

Problems With Manual Robots.txt Files:

- One wrong slash can block your entire website

- Beginners often block important pages unknowingly

- Syntax mistakes can confuse search engines

- Copy-paste from other websites can break SEO

Why a Generator Tool Is Better:

- Safe and accurate

- Saves time

- Reduces SEO risks

- Perfect for non-technical users

Using a robots.txt generator tool is not optional anymore; it’s a smart SEO move.

Benefits of Using a Robots.txt Generator Tool

1. Better Crawl Control

You decide exactly what search engines should crawl.

2. Improved SEO Performance

Search engines focus on important pages, which can improve rankings.

3. Protection of Sensitive Pages

Crawlers are asked to skip login pages, admin panels, and test pages.

4. Faster Indexing

With sitemap integration, indexing becomes more efficient.

5. Reduced Crawl Budget Waste

Search engines don’t waste time on useless or duplicate pages.

6. Beginner Confidence

Even new website owners can manage technical SEO safely.

Common Mistakes a Robots.txt Generator Tool Helps Avoid

Without a generator, many people make serious mistakes like:

- Using Disallow: / accidentally

- Blocking CSS and JS files

- Forgetting to allow important folders

- Not adding a sitemap URL

A generator tool prevents these mistakes automatically.
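As a rough illustration, a tool could catch several of these mistakes by checking the finished file against a list of URLs that should stay crawlable. The URLs, the `lint` helper, and the warning texts below are hypothetical, and Python's `urllib.robotparser` does not understand wildcard patterns, so this is only a sketch of the idea.

```python
from typing import List
from urllib.robotparser import RobotFileParser

# Hypothetical URLs that should stay crawlable on the user's site.
MUST_STAY_CRAWLABLE = [
    "https://example.com/assets/site.css",
    "https://example.com/assets/app.js",
    "https://example.com/blog/",
]


def lint(robots_txt: str, user_agent: str = "Googlebot") -> List[str]:
    """Warn about blocked must-crawl URLs and a missing Sitemap line."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    warnings = [
        f"blocked: {url}"
        for url in MUST_STAY_CRAWLABLE
        if not parser.can_fetch(user_agent, url)
    ]
    has_sitemap = any(
        line.strip().lower().startswith("sitemap:")
        for line in robots_txt.splitlines()
    )
    if not has_sitemap:
        warnings.append("no Sitemap line")
    return warnings


print(lint("User-agent: *\nDisallow: /assets/\n"))
# ['blocked: https://example.com/assets/site.css', 'blocked: https://example.com/assets/app.js', 'no Sitemap line']
```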

How to Earn Money From a Robots.txt Generator Tool

A robots.txt generator tool is not just useful; it’s monetisable.

1. Google AdSense

Display ads on your tool pages.

High-intent SEO users usually mean better RPM.

2. Affiliate Marketing

Promote:

- SEO tools

- Hosting services

- Website builders

Earn commissions on every sale.

3. Premium Version (Freemium Model)

Offer advanced features like:

- Multiple bot rules

- Bulk website support

- Save configurations

- Ad-free experience

4. Lead Generation

Collect emails and sell SEO services or courses.

5. Sponsored Tools & SaaS Promotions

SEO brands love niche traffic like this.

With consistent traffic, a simple tool can generate passive income for years.

Who Should Use a Robots.txt Generator Tool?

This tool is perfect for:

- Bloggers

- Affiliate marketers

- SEO beginners

- Developers

- Agency owners

- Niche website builders

Anyone who owns a website needs this tool.

SEO Potential of a Robots.txt Generator Tool

Keywords like:

- robots.txt generator

- create robots.txt file

- robots.txt for SEO

- robots.txt generator online

have high search intent and low competition, making this tool perfect for ranking and traffic growth.

If you want to learn how to create a Robots.txt Generator Tool, then in my opinion Skillgenerator is the best platform to learn it.

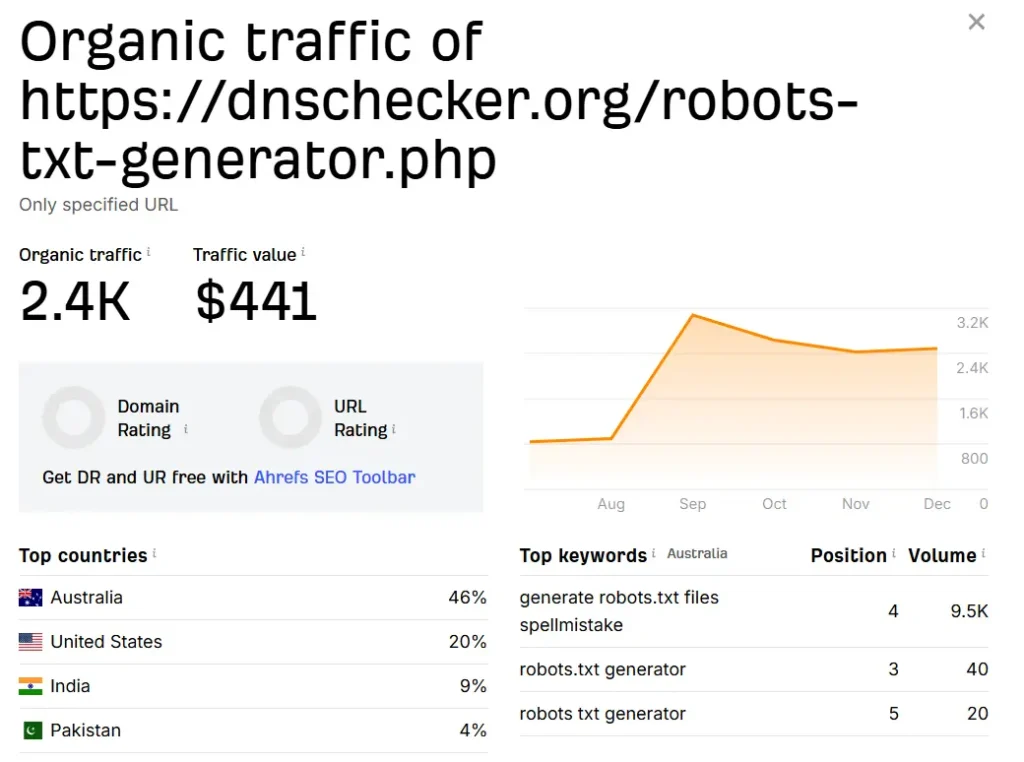

The image above shows a live example of earnings from a Robots.txt Generator Tool.

Future of Robots.txt Generator Tools

The future of robots.txt generator tools looks very promising as the digital world continues to grow at a rapid pace. With millions of new websites being launched every year, managing search engine crawlers efficiently is becoming more important than ever. At the same time, SEO awareness is no longer limited to experts—bloggers, small business owners, and creators are now actively learning technical SEO, which increases the demand for simple and reliable tools like robots.txt generators.

In the coming years, these tools are expected to become smarter and more user-friendly. AI-based recommendations may help users decide which pages should be crawled or blocked automatically, reducing the chances of costly SEO mistakes. Advanced generators could also auto-detect a website’s structure and suggest optimised rules based on real content and page types. Integration with platforms like Google Search Console may provide real-time insights, while multi-language support will make these tools accessible to a global audience. As long as search engines exist and websites depend on organic traffic, robots.txt will remain a critical SEO element, and robots.txt generator tools will continue to evolve as essential companions for modern website owners.

Conclusion: Why a Robots.txt Generator Tool Is a Smart Move

A Robots.txt Generator Tool is more than just a technical utility; it is a powerful SEO asset.

It protects your website, improves crawl efficiency, prevents critical mistakes, and helps search engines understand your content better. For beginners, it removes fear. For professionals, it saves time. And for creators, it opens doors to passive income opportunities.

Whether you want to improve SEO, build a useful tool, or create a monetised website, a robots.txt generator tool is a smart, future-proof investment.

Build it once, optimise it well, and it can work for you even while you sleep.